In an era of internet information overload, how can people know what sources to trust? That’s the question that AI and misinformation expert Shyam Sundar has been studying for years.

Sundar is the co-director of the Media Effects Research Lab at Penn State University, which is “dedicated to conducting empirical research on the social and psychological effects of media messages and technology.”

After delivering a talk on artificial intelligence at the University of Hawaiʻi, Sundar sat down with HPR to discuss his research and explain how internet users can train their critical fact-finding instincts.

Interview Highlights

On why users trust misinformation

SHYAM SUNDAR: The real kind of core reason behind this is information overload. So when we are overloaded with information, which is the case as media technologies advance, we are having to economize on our cognitive resources, on our mental resources, and so as a result, we are all sort of cognitive misers, and we are not going to do the due diligence in checking out all the information and sources and so forth. …

This is what we call cognitive heuristics or mental shortcuts. And so, for example, if there's a person in a white lab coat saying something about a medical or health issue, you immediately believe them to be an authority figure. So we call that the authority heuristic. Or when you see a product on Amazon that's got five stars and has a lot of positive ratings, even though it's not by any kind of expert, it's by peers or other shoppers, you tend to have faith in this. This is what we call the bandwagon heuristic. …

We have, these days, the machine heuristic. We think of machines as being objective, accurate, unbiased, secure, cannot gossip behind your back and so forth. And so we trust machines, things that a machine produces as being the highest information quality. And so we have all these different heuristics or mental shortcuts through which we judge in an instant whether something should be trusted, or something should not be trusted.

On the lack of trusted news sources

SUNDAR: I think this makes it very likely that all kinds of unvetted, unverified information will float around much more freely because there are no general interest intermediaries. That's what we call these news organizations. These are basically staffed by people who have training in the art and craft of journalism, and so reporters, especially local news reporters, know how to vet facts, how to double check. You always get two sources to verify something, right? So these are basics of journalism that most people don't stop to think that my friend who posted something on Nextdoor or on Facebook has had that. So you don't stop to think that my friend is not trained in journalism, doesn't know better. Instead, you just go with what they post on your feed, and you kind of say, I heard this, and you kind of go from there. And so it makes it very possible for people to get non-verified and, in fact, non-verifiable information in news deserts.

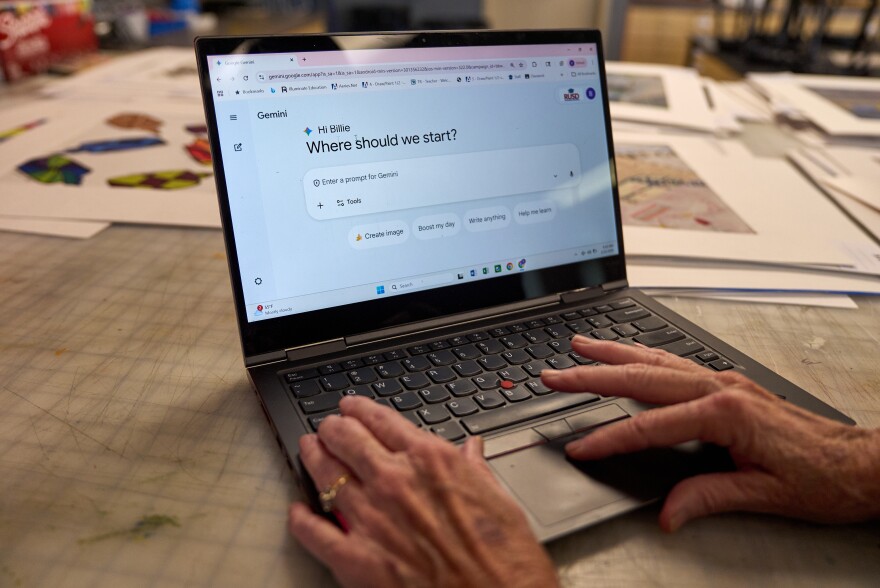

On dangers of AI as an information source

SUNDAR: With AI, a lot of people rely on AI summaries. And so AI summarizes news or whatever it is. Even if you go to a search engine, it actually gives you an AI summary. And just in the last eight to 10 months since it's been there, there's been a 62% drop in the number of people who are clicking through the search links. …

So for me, that's really scary, because that's not the search engine part. That is just a summarizing service that sits on top of the search engine and can easily be misled. I've had several cases of my students getting the wrong idea about a reading that they're supposed to do for class based on what they thought was an accurate AI summary of it, but in fact, it's not. … And so I see this all the time as a professor. If even my students do that, I can only imagine how much all the news consumers out there might be misled by these so-called AI summaries.

More information about Sundar’s research with the Penn State Media Effects Research Lab can be found on their website.

This story aired on The Conversation on April 22, 2026. The Conversation airs weekdays at 11 a.m. Jinwook Lee adapted this story for the web.